diff --git a/README.md b/README.md

index d13f6b2b8..d6db25296 100644

--- a/README.md

+++ b/README.md

@@ -5,7 +5,7 @@

https://github.com/hodlen/PowerInfer/assets/34213478/b782ccc8-0a2a-42b6-a6aa-07b2224a66f7

-The demo running environment consists of a single 4090 GPU, the model is Falcon (ReLU)-40B, and the precision is FP16.

+The demo runs on a single 4090 GPU with 24 GB of memory; the model is Falcon (ReLU)-40B, and the precision is FP16.

---

## Abstract

@@ -82,9 +82,9 @@ cmake --build build --config Release

| Base Model | GGUF Format Link | Original Model |

|------------|------------------|----------------|

-| LLaMA(ReLU)-2-7B | [PowerInfer/ReluLLaMA-13B-PowerInfer-GGUF](https://huggingface.co/PowerInfer/ReluLLaMA-13B-PowerInfer-GGUF) | [SparseLLM/ReluLLaMA-7B](https://huggingface.co/SparseLLM/ReluLLaMA-7B) |

+| LLaMA(ReLU)-2-7B | [PowerInfer/ReluLLaMA-7B-PowerInfer-GGUF](https://huggingface.co/PowerInfer/ReluLLaMA-7B-PowerInfer-GGUF) | [SparseLLM/ReluLLaMA-7B](https://huggingface.co/SparseLLM/ReluLLaMA-7B) |

| LLaMA(ReLU)-2-13B | [PowerInfer/ReluLLaMA-13B-PowerInfer-GGUF](https://huggingface.co/PowerInfer/ReluLLaMA-13B-PowerInfer-GGUF) | [SparseLLM/ReluLLaMA-13B](https://huggingface.co/SparseLLM/ReluLLaMA-13B) |

-| Falcon(ReLU)-40B | [PowerInfer/ReluLLaMA-13B-PowerInfer-GGUF](https://huggingface.co/PowerInfer/ReluLLaMA-13B-PowerInfer-GGUF) | [SparseLLM/ReluFalcon-40B](https://huggingface.co/SparseLLM/ReluFalcon-40B) |

+| Falcon(ReLU)-40B | [PowerInfer/ReluFalcon-40B-PowerInfer-GGUF](https://huggingface.co/PowerInfer/ReluFalcon-40B-PowerInfer-GGUF) | [SparseLLM/ReluFalcon-40B](https://huggingface.co/SparseLLM/ReluFalcon-40B) |

## Inference

- If you just have CPU:

@@ -107,11 +107,11 @@ Then, you can use the following instruction to run PowerInfer with GPU index:

## Evaluation

- +

-

+

- +

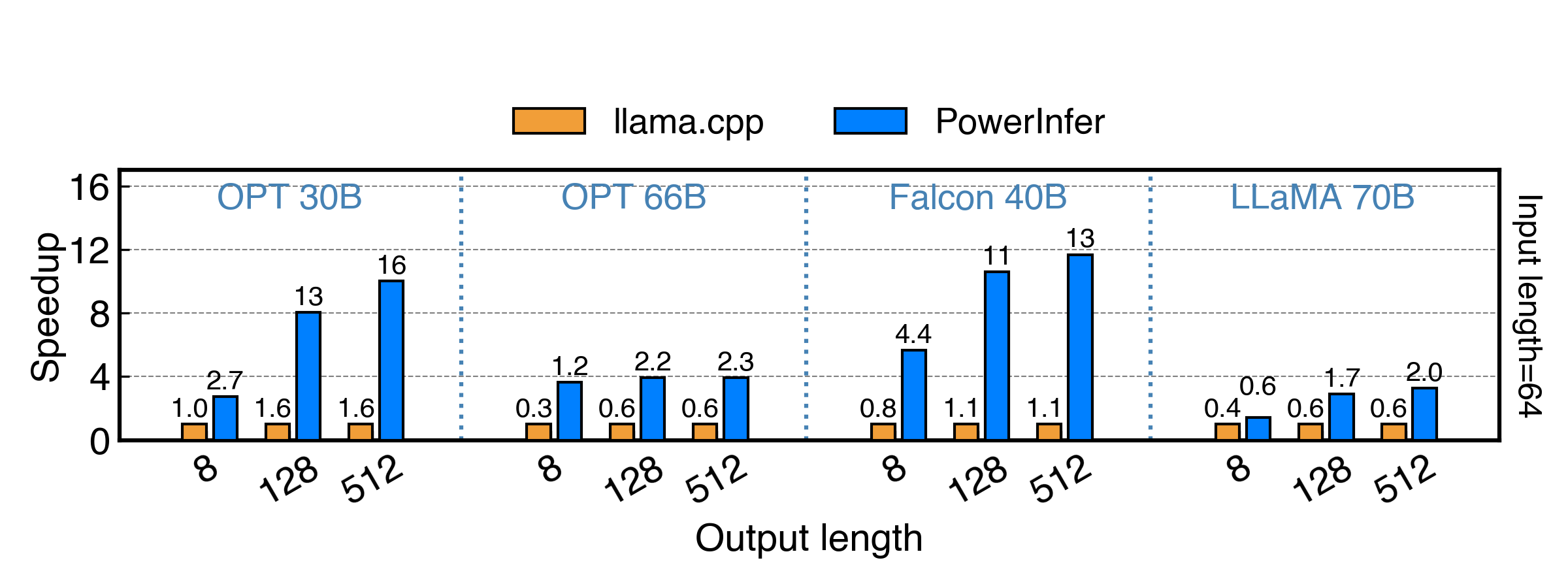

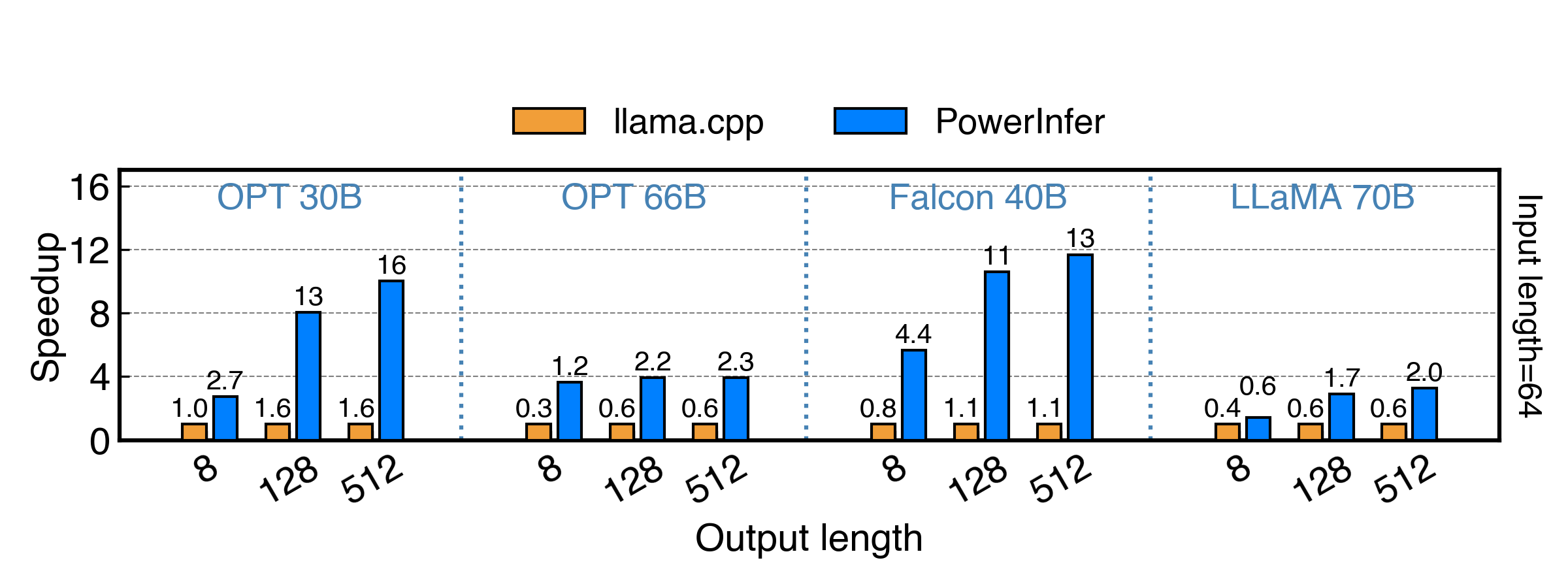

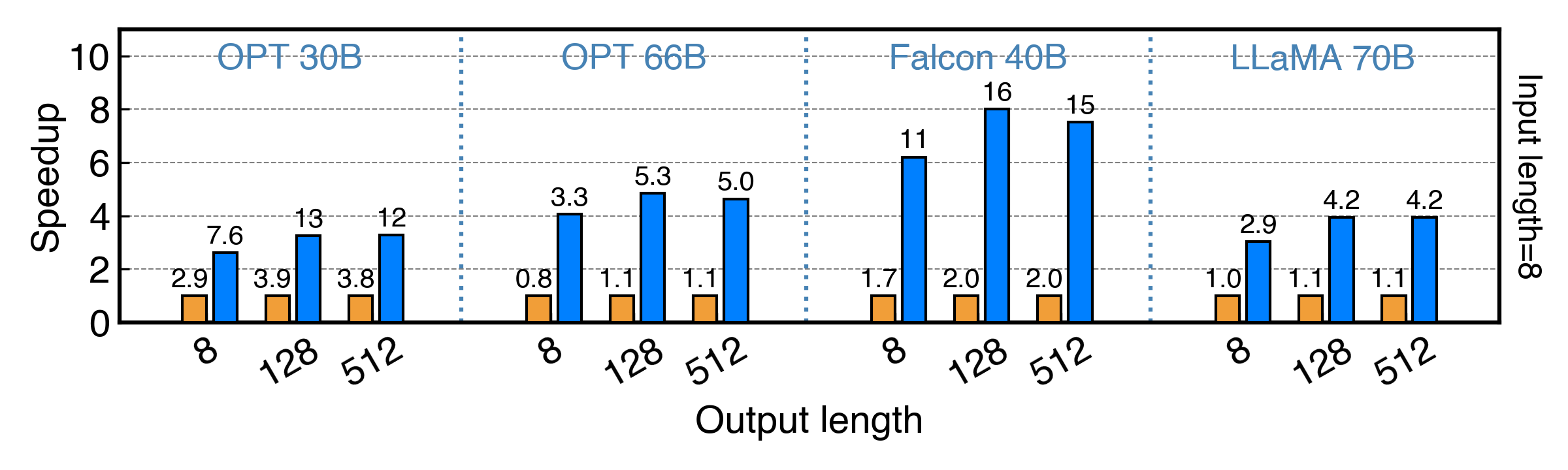

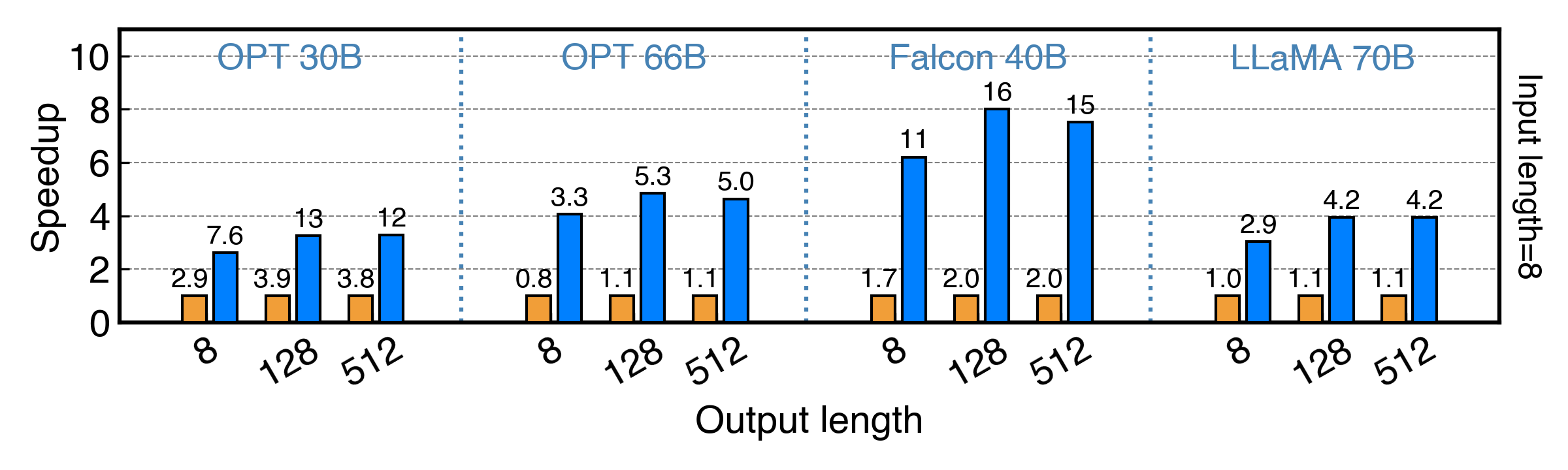

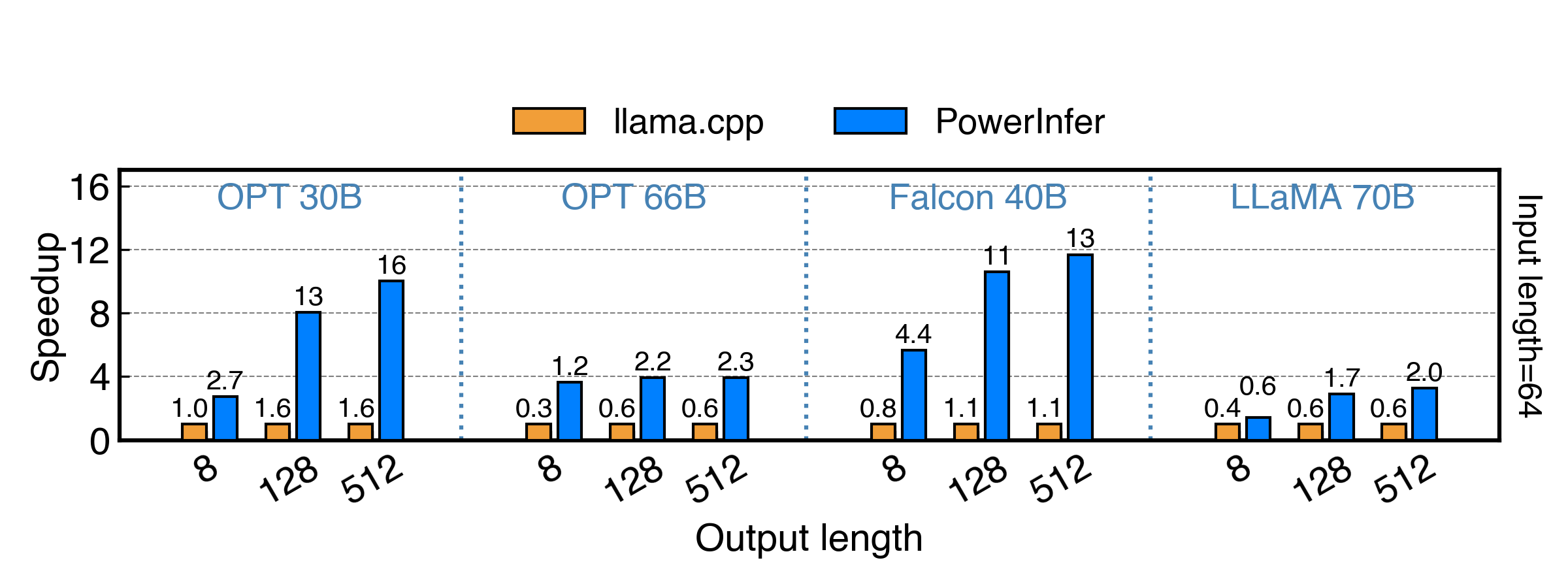

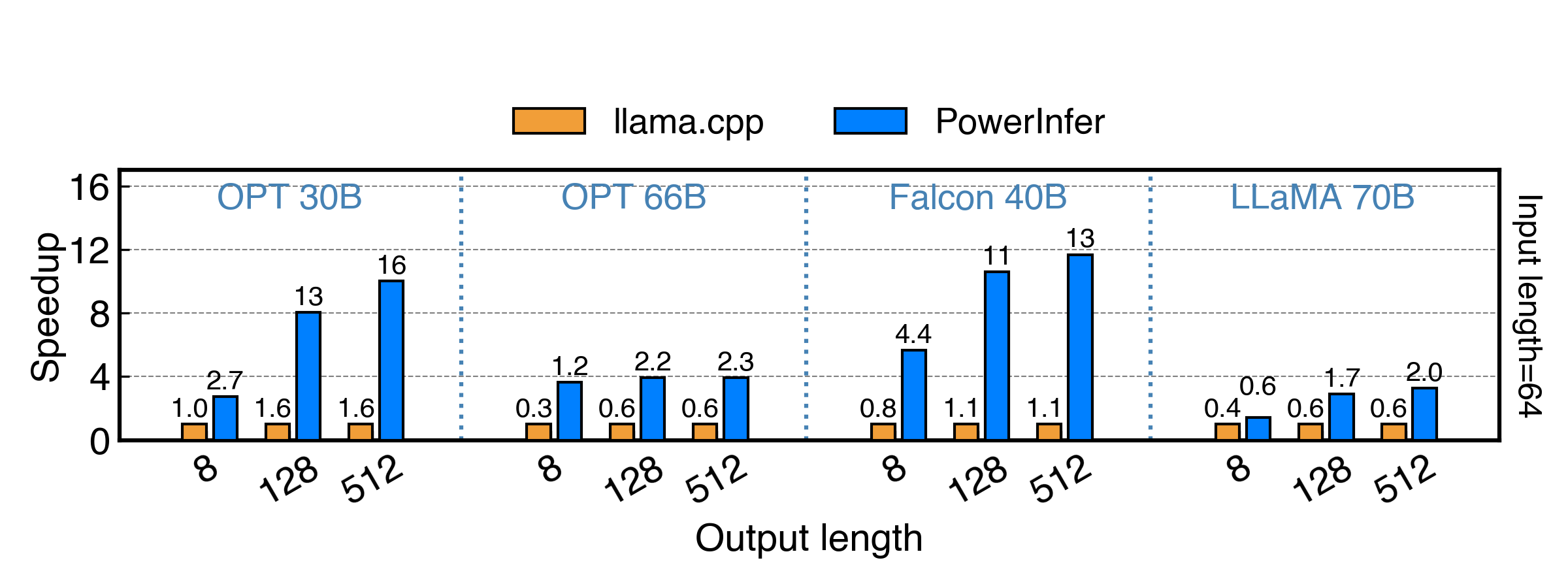

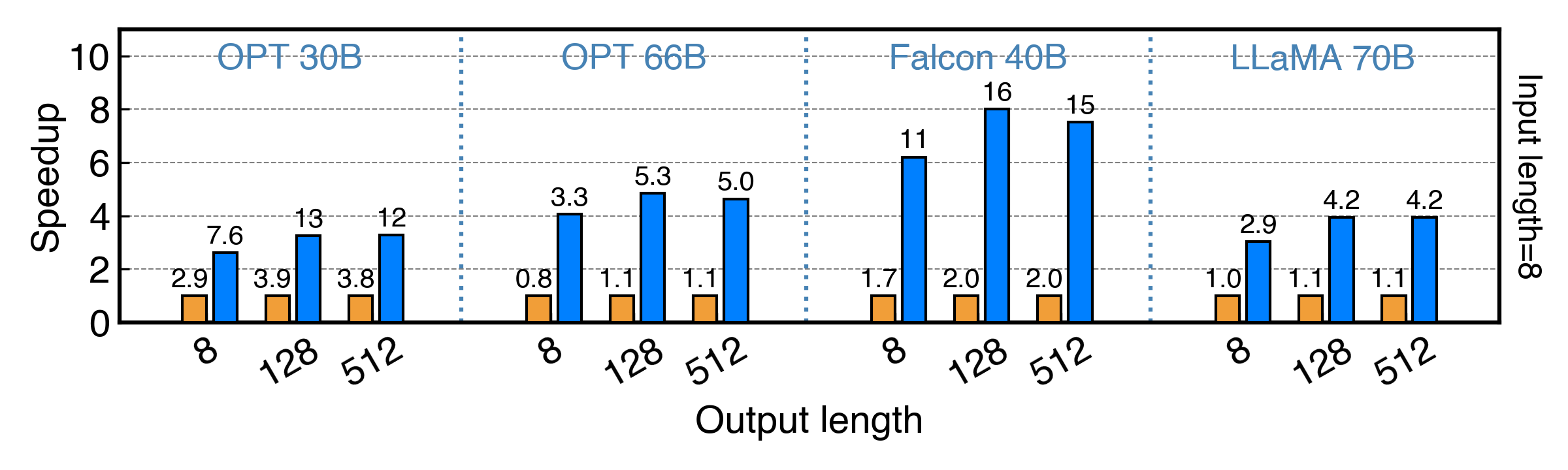

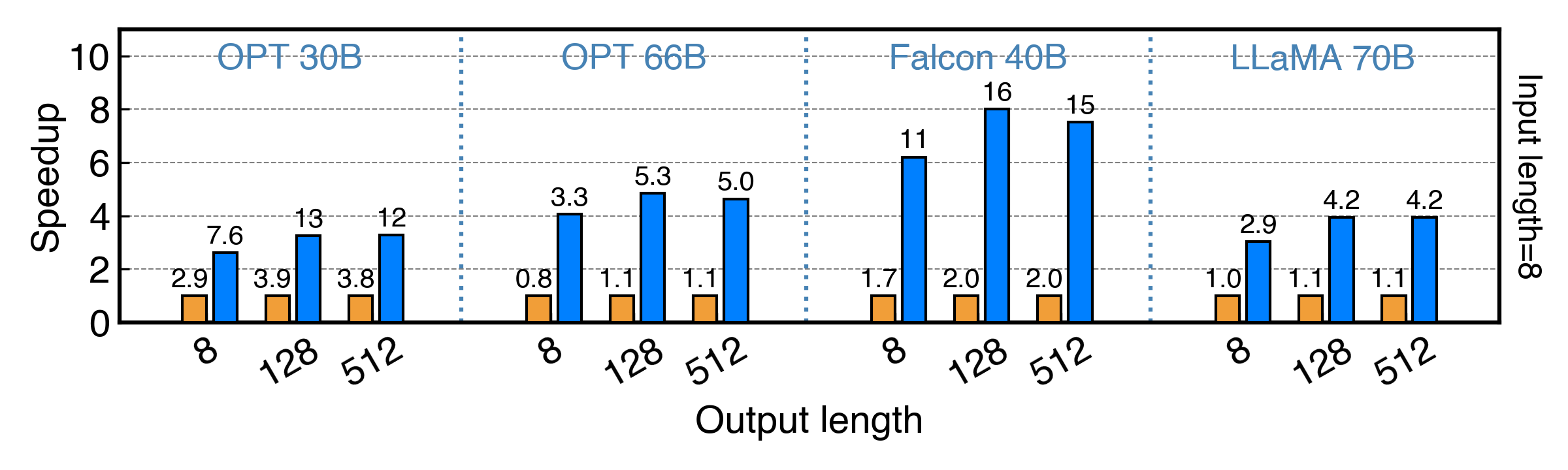

-PowerInfer achieves up to 11x and 8x speedup for fp16 and int4 model!

+PowerInfer achieves up to 11x and 8x speedup for FP16 and INT4 models, respectively!

## TODOs

We will release the code and data in the following order, please stay tuned!

@@ -130,8 +130,8 @@ We will release the code and data in the following order, please stay tuned!

If you find PowerInfer useful or relevant to your project and research, please kindly cite our paper:

```bibtex

-Stay Tune

+Stay tuned!

```

## Acknowledgement

-We are thankful for the easily modifiable operator library [ggml](https://github.com/ggerganov/ggml) and execution runtime provided by [llama.cpp](https://github.com/ggerganov/llama.cpp). We also extend our gratitude to [THUNLP](https://nlp.csai.tsinghua.edu.cn/) for their support of ReLU-based sparse models. We also appreciate the research of [DejaVu](https://proceedings.mlr.press/v202/liu23am.html), which inspires PowerInfer.

\ No newline at end of file

+We are thankful for the easily modifiable operator library [ggml](https://github.com/ggerganov/ggml) and execution runtime provided by [llama.cpp](https://github.com/ggerganov/llama.cpp). We also extend our gratitude to [THUNLP](https://nlp.csai.tsinghua.edu.cn/) for their support of ReLU-based sparse models. We also appreciate the research of [DejaVu](https://proceedings.mlr.press/v202/liu23am.html), which inspires PowerInfer.
