- Thread count set equal to cpu_count() if it's < 6, otherwise set to cpu_count()-2 instead. This can be forcibly overwritten by the --threads parameter. Setting all threads=cpu_count() chokes my own PC and slows it down badly, so I'd rather make it optional.
- Added localmodehost as a URL parameter in Kobold Lite instead, to avoid monkeypatching the embedded kobold lite directly. It should be parsed via ?localmodehost=(host). Also your updated klite file has the wrong encoding, it should be UTF-8, some of the symbols are incorrect such as the palette icon in settings. Repackaged the new version of Kobold Lite correctly with changes.
- Reverting the TK GUI filedialog if no model is provided, because I want to keep it noob friendly for those who don't know how to use command line args. The file dialog only loads if there are no command line args. If command line args are present, the GUI will not trigger.
- Modified the argparser to also take positional arguments for backwards compatibility, in addition to the optional argparse flags specified.
- Your code does not work if embedded kobold is removed. The embedded KAI variable was not declared in the correct scope, and also Python f-string formatted variables cannot work with raw byte strings. You also have incorrect indentation when returning the response body - have corrected all the above but please do test all codepaths if possible.
- There is a good reason to bind to "" (0.0.0.0) instead of a specific IP. It allows receiving requests from all routable interfaces. I don't know why you need an explicitly defined --host flag, but I will leave it there as an optional parameter, though the default should still be to accept from all interfaces. In that way, even if the displayed url is localhost, connecting via 192.168.x.x will also work, for example.
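The defaults described in these notes can be sketched roughly as follows. This is only an illustration under stated assumptions, not the actual script: the helper names `default_thread_count` and `build_parser`, the positional names `model_file` and `port`, and the default port value are hypothetical. Only the `--threads` and `--host` flags, the thread rule, and the empty-string bind default come from the notes above.

```python
import argparse
import os


def default_thread_count(cpu_count=None):
    """Use every core on small machines, otherwise leave two cores free."""
    if cpu_count is None:
        cpu_count = os.cpu_count() or 1  # os.cpu_count() may return None
    return cpu_count if cpu_count < 6 else cpu_count - 2


def build_parser(cpu_count=None):
    # Hypothetical parser; argument names other than --threads/--host are assumed.
    parser = argparse.ArgumentParser(description="Illustrative launcher arguments")
    # Optional positionals keep older "script model.bin 5001" style calls working.
    parser.add_argument("model_file", nargs="?", help="path to the ggml model")
    parser.add_argument("port", nargs="?", type=int, default=5001)
    # --threads overrides the computed default.
    parser.add_argument("--threads", type=int,
                        default=default_thread_count(cpu_count))
    # An empty host string means "bind to all interfaces" (0.0.0.0).
    parser.add_argument("--host", default="")
    return parser
```

Because both positionals use `nargs="?"`, running with no arguments at all still parses cleanly, which leaves room for the file dialog fallback mentioned above.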

| File |
|---|
| .github/ISSUE_TEMPLATE |
| examples |
| models |
| prompts |
| tests |
| .gitignore |
| convert-gptq-to-ggml.py |
| convert-pth-to-ggml.py |
| expose.cpp |
| extra.cpp |
| extra.h |
| ggml.c |
| ggml.h |
| klite.embd |
| LICENSE.md |
| llama.cpp |
| llama.h |
| llama_for_kobold.py |
| llamacpp.dll |
| main.exe |
| make_pyinstaller.bat |
| Makefile |
| MIT_LICENSE_GGML_LLAMACPP_ONLY |
| niko.ico |
| preview.png |
| quantize.exe |
| quantize.py |
| README.md |
| SHA256SUMS |

llama-for-kobold

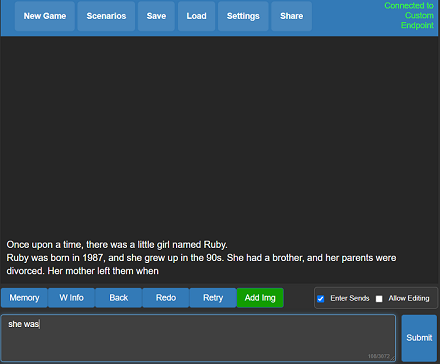

A self-contained distributable from Concedo that exposes llama.cpp function bindings, allowing it to be used via a simulated Kobold API endpoint.

What does it mean? You get llama.cpp with a fancy UI, persistent stories, editing tools, save formats, memory, world info, author's note, characters, scenarios and everything else Kobold and Kobold Lite have to offer, all in a tiny package under 1 MB in size, excluding model weights.

Usage

- Download the latest release here or clone the repo.

- Windows binaries are provided in the form of llamacpp-for-kobold.exe, which is a PyInstaller wrapper for llamacpp.dll and llama_for_kobold.py. If you are concerned about running a prebuilt binary, you can rebuild it yourself with the provided makefiles and scripts.

- Weights are not included. You can use quantize.exe to generate them from your official weight files (or download them from other places).

- To run, execute llamacpp-for-kobold.exe or drag and drop your quantized ggml model.bin file onto the .exe, and then connect with Kobold or Kobold Lite.

- By default, you can connect to http://localhost:5001
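Once the server is running, a client could talk to it roughly like this. This is a sketch under assumptions: it presumes the simulated endpoint follows KoboldAI's `/api/v1/generate` route and its `{"results": [{"text": ...}]}` response shape, which this README does not spell out. The helper names `build_generate_request` and `generate` are hypothetical.

```python
import json
import urllib.request

BASE_URL = "http://localhost:5001"  # default address from the usage notes


def build_generate_request(prompt, max_length=80, base_url=BASE_URL):
    # Assumption: the server mimics KoboldAI's /api/v1/generate route.
    payload = {"prompt": prompt, "max_length": max_length}
    return urllib.request.Request(
        base_url + "/api/v1/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, **kwargs):
    # Needs a running server; urlopen raises URLError if nothing is listening.
    with urllib.request.urlopen(build_generate_request(prompt, **kwargs)) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # Assumption: KoboldAI-style response {"results": [{"text": "..."}]}.
    return body["results"][0]["text"]
```

Calling `generate("Once upon a time")` would then return the generated continuation, provided the route and response format match these assumptions.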

Considerations

- Don't want to use pybind11 due to dependencies on MSVCC

- Make ZERO changes to main.cpp if possible, MINIMAL changes otherwise - do not move their function declarations elsewhere!

- Leave main.cpp UNTOUCHED. We want to be able to update the repo and pull any changes automatically.

- No dynamic memory allocation! Setup structs with FIXED (known) shapes and sizes for ALL output fields. Python will ALWAYS provide the memory, we just write to it.

- No external libraries or dependencies. That means no Flask, Pybind and whatever. All You Need Is Python.
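The "Python provides the memory" rule above can be illustrated with plain ctypes. This is a minimal sketch, not the project's real bindings: the struct name `GenerationOutput`, its fields, the `MAX_TEXT` capacity, and `fake_native_generate` (a Python stand-in for the C function) are all hypothetical.

```python
import ctypes

MAX_TEXT = 256  # hypothetical fixed capacity agreed on by both sides


class GenerationOutput(ctypes.Structure):
    # Hypothetical layout; every field has a fixed, known size.
    _fields_ = [
        ("status", ctypes.c_int),
        ("text", ctypes.c_char * MAX_TEXT),
    ]


def fake_native_generate(out_ptr):
    """Stand-in for the C side: it only writes into caller-provided memory."""
    out = out_ptr.contents
    out.status = 1
    out.text = b"hello from the native side"  # must fit within MAX_TEXT bytes


output = GenerationOutput()  # Python allocates and owns the buffer
fake_native_generate(ctypes.pointer(output))
print(output.status, output.text.decode("utf-8"))
```

With a real shared library, the same struct would typically be passed via `ctypes.byref(output)` to a function loaded through `ctypes.CDLL`. Since the native side never allocates, there is nothing for it to free and no ownership to negotiate across the language boundary.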

License

- The original GGML library and llama.cpp by ggerganov are licensed under the MIT License

- However, Kobold Lite is licensed under the AGPL v3.0 License

- The provided python ctypes bindings in llamacpp.dll are also under the AGPL v3.0 License

Notes

- There is a fundamental flaw with llama.cpp, which causes generation delay to scale linearly with original prompt length. If you care, please contribute to this discussion which, if resolved, will actually make this viable.